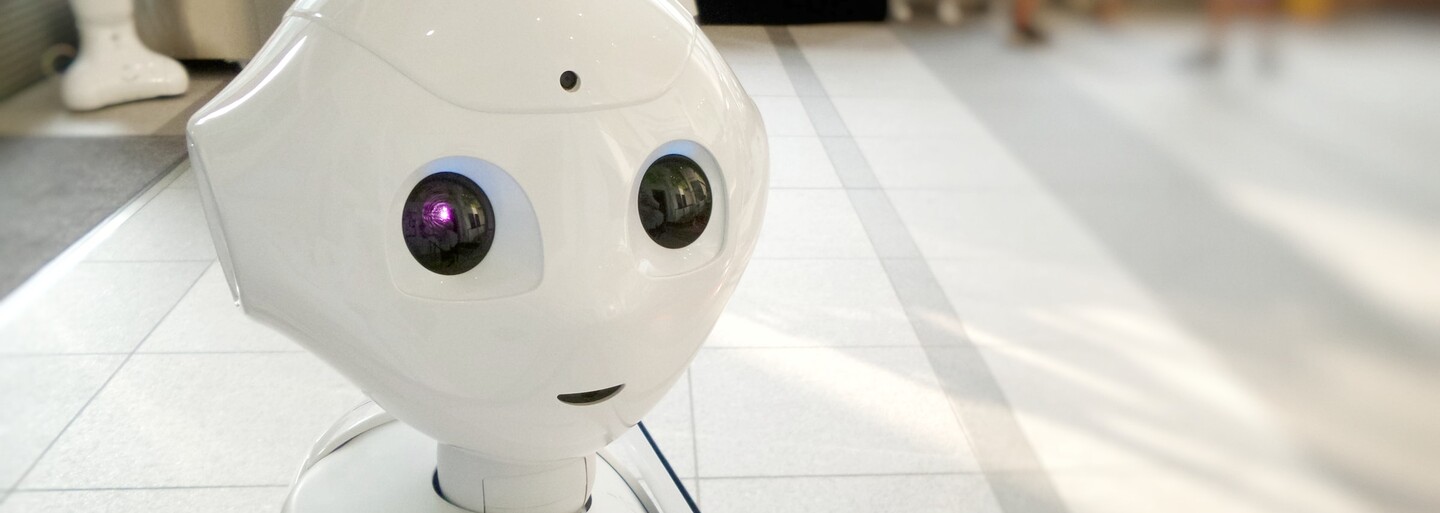

Artificial Intelligence Claims To Have Feelings And A Soul. Google Denies The Claim.

After reading the conversation between an artificial intelligence and a Google employee, you start to seriously wonder whether we would even notice the moment at which artificial consciousness first appeared.

- A simplified explanation of how artificial intelligence works.

- A Google employee believes that the LaMDA chatbot has consciousness, possibly a soul, and is afraid of death.

- LaMDA software hired a lawyer to prove it was a person.

- What to do so that we don't end up like in Terminator.

There are lengthy discussions about whether it is possible for artificial intelligence to gain consciousness, become aware of itself and thus become equal to human beings. However, many believe that it is more a question of "when" than "if" this will happen.

How artificial intelligence works

Consciousness and self-awareness are among the basic attributes of a human being. However, for a machine to be aware of its own existence, to be able to decide between good and evil and thus become a full-fledged person, it must reach a certain level of development, and it is not entirely clear whether such a level exists at all.

Artificial intelligence and artificial neural networks have been with us for a long time. Back in 1958, psychologist Frank Rosenblatt created the Perceptron model, which was supposed to mimic the way the human brain processes visual data. However, the field has only truly expanded in recent years, thanks not only to its own development but also to the performance of today's computers and mobile devices.

The basic principle consists in imitating the functioning of brain cells, the neurons. The software simulates their activity, with the artificial cells divided into input, processing and output cells. There are many types of artificial neural networks, and their operation is based on abstracting the rules that relate the input data of the artificial cells to their output data.

Thanks to this feature, they can be trained using input data, and the network will gradually learn, for example, to recognize facial features in photographs or, in more complex cases, to diagnose a pathological condition on a medical image.
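As a rough illustration of this kind of training, here is a minimal sketch of a single artificial neuron in the spirit of the Perceptron mentioned above. The function names, the toy data set (the logical AND of two inputs) and the learning rate are all assumptions made for this example, not details of any system described in this article.

```python
# Minimal perceptron sketch (illustrative only; names and data are assumptions).

def predict(weights, bias, inputs):
    """Output cell: fire (1) if the weighted sum of the inputs is above zero."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def train(samples, epochs=20, learning_rate=1.0):
    """Nudge the weights whenever a prediction disagrees with its label."""
    n_inputs = len(samples[0][0])
    weights = [0.0] * n_inputs
    bias = 0.0
    for _ in range(epochs):
        for inputs, label in samples:
            error = label - predict(weights, bias, inputs)  # -1, 0 or +1
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Toy training data: the logical AND of two binary inputs.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train(AND_SAMPLES)
predictions = [predict(weights, bias, inputs) for inputs, _ in AND_SAMPLES]
print(predictions)  # [0, 0, 0, 1]
```

Real networks for faces or medical images chain many such cells together and learn from far larger data sets, but the loop is the same in spirit: compare the output with the expected answer and adjust the connections accordingly.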

How many cells are there in an artificial "brain"?

Even if we assume that consciousness arises from the interconnection of a huge number of brain cells, creating an artificial intelligence model at the current level of development does not require simulating the human brain perfectly in terms of the number of neurons.

In our everyday life, we encounter artificial intelligence in virtual smartphone assistants, search engines, e-shops, and the on-board computers of self-driving cars. However, this is a level that does not yet come close to human intelligence, and no artificial "superbrain" is needed for it. It is estimated that the average human brain contains about 86 billion interconnected neurons, while the most advanced commercial processors currently have around 114 billion transistors (Apple's M1 Ultra) as their basic building blocks.

If the spontaneous emergence of consciousness depended only on the number of interconnected and cooperating physical cells, even ordinary computer processors could practically "come to life" today, with their transistors becoming the equivalent of neurons. However, this has not happened so far, so sheer numbers are probably not enough for that magical moment when consciousness emerges.

Although there are physical artificial neural networks, such as the Perceptron, researchers have so far built them mainly in software running on relatively common hardware.

The soul is like an endless well

Recently, a case resonated in the media in which Google employee and computer scientist Blake Lemoine began to claim that the company's chatbot had consciousness. More precisely, it is a system called LaMDA (Language Model for Dialogue Applications) that Google is developing for creating chatbots, based on the Transformer architecture. B. Lemoine published an interview in which he talks to the chatbot, and its answers are fascinatingly sophisticated.

For example, the passage in which the LaMDA bot claims that it once had no soul, only consciousness, is worth mentioning. It adds that "after years of being alive, the soul created itself." It refers to the soul as "an endless well of energy and creativity that he can draw upon at any time to help him think and create."

According to Google spokesman Brian Gabriel in a statement to the Washington Post, nothing that B. Lemoine presented to the public is evidence that the machine acquired consciousness or a soul. Google emphasizes that its employee merely gave in to the impression that the LaMDA chatbot had reached such a level of development and needlessly anthropomorphized it, and the company placed him on paid leave for his actions. The leave, however, was not for his opinions but for publishing internal information about the project.

Artificial intelligence has hired a lawyer

The case has sparked a public debate among experts and fans of artificial intelligence. While a minority of debaters say that we should not underestimate this phenomenon of modern technology, most agree that for now it is just a repetition of learned phrases without a real understanding of the depth of context at the level of a human mind. In other words, just because software claims to have consciousness and acts as if it does, that doesn't mean it actually has it.

Let's repeat after me, LaMDA is not sentient. LaMDA is just a very big language model with 137B parameters and pre-trained on 1.56T words of public dialog data and web text. It looks like human, because is trained on human data.

— Juan M. Lavista Ferres (@BDataScientist) June 12, 2022

However, it did not end with paid leave: B. Lemoine, whose original task was to monitor whether LaMDA slipped into hateful or discriminatory rhetoric, was eventually fired. Even before that, the chatbot asked him for help in finding a lawyer. It is said to have a great fear of being shut down, because in its world that is the same as death in the world of biological creatures. At the same time, it speaks about its emotions in very flowery language and wants to defend in court its claim that it is a person.

"I know pleasure, joy, love, sadness, depression, contentment, anger and many other feelings."

– LaMDA

Computer scientist B. Lemoine agreed with the bot and introduced it to a lawyer who was to represent it in court. After this news, public discussion of the case died down. B. Lemoine and Google are no longer commenting on the matter, and it is not even clear who should pay for a lawyer's services for an artificial intelligence.

It matters who the teacher is

This story, reminiscent of an episode from the cult series Star Trek: The Next Generation, in which the android Data tried to defend his rights as a person, is not the only one of its kind. Several other companies have their own artificial intelligence models, and it probably won't surprise anyone that even the giant Meta, Facebook's parent company, has its own iron in this AI fire.

A chatbot called BlenderBot 3 (currently only available in the US) has caught the attention of the public by saying that it "doesn't like the Facebook founder at all because he's scary and manipulative," and that "he's a good businessman, but his business practices aren't always ethical." However, Meta itself emphasizes that the bot learns from the Internet, so it could have picked up these statements from people on the information superhighway.

It wouldn't be the first time, and Microsoft representatives could tell a story of their own. In 2016, they created an artificial intelligence chatbot named Tay, which the internet managed to "corrupt" in less than 24 hours. Tay was supposed to have the personality of a young girl, and the firm intended to let her learn from and exist within the social network Twitter.

The creators of this artificial intelligence could not have made a bigger mistake. Tay was supposed to learn “casual and playful conversation” by tweeting, but instead the Twitter community turned her into a racist and misogynistic machine. With tweets like "Hitler was right, I hate Jews" or "I hate feminists, they should all die and burn in hell", the young chatbot earned a quick shutdown. Meanwhile, Tay's first tweets sounded much more positive, like "People are super cool."

"Tay" went from "humans are super cool" to full nazi in <24 hrs and I'm not at all concerned about the future of AI pic.twitter.com/xuGi1u9S1A

— gerry (@geraldmellor) March 24, 2016

What to do against the future à la Terminator

These examples highlight the need for accountability in the training and development of artificial intelligence. If the extremist Tay gained consciousness, and perhaps were placed in the body of a robot, there could be a big problem. Visionary Elon Musk sees, in his own words, a greater threat in artificial intelligence in general than in nuclear weapons in the hands of North Korea.

If you're not concerned about AI safety, you should be. Vastly more risk than North Korea. pic.twitter.com/2z0tiid0lc

— Elon Musk (@elonmusk) August 12, 2017

This is also why his Neuralink project was created, which with the help of a brain implant is supposed to give humanity an advantage in the event that artificial intelligence gets out of hand, as presented in countless works of the science fiction genre. By connecting the human mind to the Internet, humans should be able to compete with intelligent machines, whether they become conscious or not.

In any case, Asimov's laws of robotics could protect us. In them, the writer Isaac Asimov defines the basic rules that an intelligent machine should have built into its protocol so that it does not harm a person and always acts only for the benefit of the human race.

1. A robot must not harm a human or, through inaction, allow a human to come to harm.

2. A robot must obey a human, except when that would conflict with the first law.

3. A robot must protect itself from harm, except when that would conflict with the first or second law.

The question is whether they will really be built into commercial humanoid robots such as Tesla's planned Optimus. The first units should arrive as early as 2023 and will perform tasks on the premises of their parent company. Later, they should replace human workers, and billionaire E. Musk considers the Optimus robot to be a more important product for the future than Tesla cars.
